In the rapidly evolving field of deep learning, choosing the right framework to develop and deploy your models is crucial. Two of the most popular choices are TensorFlow and PyTorch. Both frameworks have gained substantial traction within the AI community, and they each come with their own set of features, strengths, and weaknesses. In this blog post, we’ll dive into a comprehensive comparison of TensorFlow and PyTorch to help you make an informed decision based on your project’s requirements.

What Is TensorFlow?

Developed by Google Brain, TensorFlow is an open-source deep learning framework that was first released in 2015. It has gained widespread adoption due to its scalability and robustness, making it suitable for both research and production environments. TensorFlow offers both high-level APIs for ease of use and low-level APIs for more advanced customization.

Diving into TensorFlow’s Advantages

Google-Powered Innovation: TensorFlow is built and maintained by Google, which also uses it in its own products, so it sees frequent releases and new features. That steady development makes it a top pick for real-world projects.

For Everyone, by Everyone: TensorFlow is open-source, which means it’s like a gift to the whole community. Anyone can use it, and people all around the world are working together to make it even cooler.

Data Gets Fancy with TensorBoard: TensorFlow comes with a handy tool called TensorBoard. It turns raw training data, such as loss and accuracy values, into interactive charts, and it can display your model’s graph. That makes it easier to spot and fix mistakes while you build your model.

Keras Compatibility: TensorFlow and Keras are like best friends. Keras ships with TensorFlow as its high-level API (tf.keras), so you can define, train, and evaluate a model in a few lines without too much hassle.

Big or Small, TensorFlow Handles All: Whether you’re building a little project or a massive system, TensorFlow can handle it. The same framework scales from a single laptop to training distributed across many machines.

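Multiple Language Support: Python is TensorFlow’s primary language, and the framework can also be used from languages such as C++, JavaScript, C#, Ruby, and Swift through official or community-maintained bindings. The level of support varies by language, but this lets you work in an environment you are comfortable in.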

Super Smart Architecture: TensorFlow isn’t just about building models. It can also spread computation across CPUs and GPUs to make training faster. It even runs on TPUs (Tensor Processing Units), Google’s custom accelerator chips built for machine learning workloads. Note that a TPU is hardware, not a version of TensorFlow.

What Is PyTorch?

PyTorch, an open-source deep learning framework, emerged from the collaborative efforts of the AI community under the guidance of Meta AI. First released in September 2016, PyTorch has now reached its second major version, PyTorch 2. It runs on both CPUs and GPUs, and it can also run on TPUs through extensions such as PyTorch/XLA.

A significant milestone came in September 2022 when Meta announced the transition of PyTorch’s stewardship to the PyTorch Foundation, now a part of the Linux Foundation—a hub dedicated to fostering open-source software development collaboratively.

At its core, PyTorch is built upon Torch, a scientific computing framework written in C and CUDA (NVIDIA’s C/C++-based platform for GPU programming) with a Lua scripting interface. However, PyTorch’s elegance shines through its Python interface, paving the way for its meteoric rise as one of the most sought-after deep learning frameworks.

Diving into PyTorch’s Advantages

Simplicity Redefined: PyTorch adheres to the principle of simplicity, maintaining a consistent and user-friendly interface that eases the learning curve.

Unleash Your Creativity: Providing a canvas for creativity, PyTorch is remarkably flexible. It gives developers granular control over model architecture and training without forcing them to work with low-level details.

The Python Harmony: PyTorch seamlessly integrates with popular Python libraries such as NumPy, enhancing its versatility and user-friendliness.

What Are The Key Differences Between TensorFlow And PyTorch?

Let us now look at some key differences between TensorFlow and PyTorch across several important parameters.

Research Paper Implementations

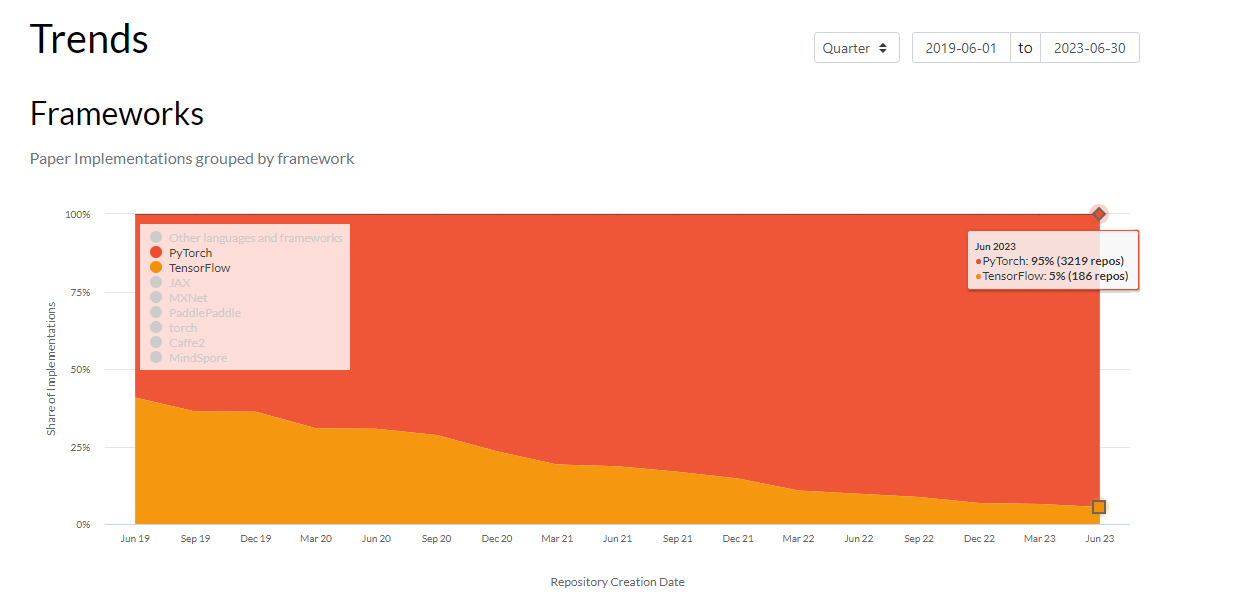

It’s evident that PyTorch is the preferred choice of the majority of researchers. Data from Papers with Code shows that in June 2023, among tracked paper implementations that use one of the two frameworks, about 95% relied on PyTorch compared to about 5% for TensorFlow.

Accuracy & Training Time

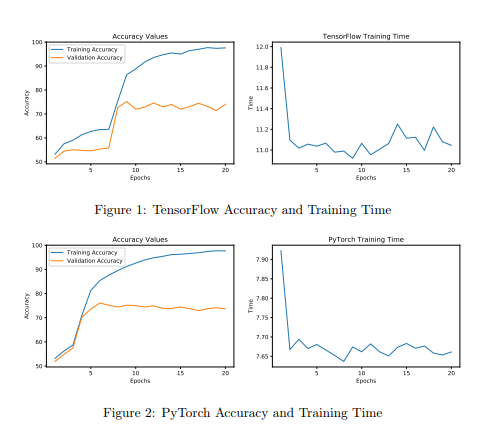

Let’s take a closer look at the accuracy and training time comparison between TensorFlow and PyTorch. The graphs illustrating accuracy reveal a striking similarity in the performance of both frameworks. As the models undergo training, their accuracy steadily climbs, a sign that they’re learning from the data they’re being exposed to.

Validation accuracy is measured on held-out data that the model does not train on, so it shows how well what the model has learned carries over to new examples. Both the TensorFlow and PyTorch models reach an average validation accuracy of around 78% after 20 training epochs. This close match shows that both frameworks implement neural networks accurately.

When it comes to accuracy, TensorFlow and PyTorch are on par: given the same model and dataset, both frameworks produce comparable results, and neither holds an accuracy advantage in this side-by-side comparison.
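To make the metric concrete, here is a minimal sketch of how validation accuracy is computed, written in plain Python rather than with either framework. The labels and predictions are hypothetical values chosen for illustration; they are not the data behind the graphs above.

```python
def accuracy(predictions, labels):
    """Return the fraction of predictions that match the true labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot compute accuracy on an empty set")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Hypothetical held-out labels and one model's predictions after an epoch.
val_labels = [0, 1, 1, 0, 2, 2, 1, 0, 2]
val_predictions = [0, 1, 0, 0, 2, 1, 1, 0, 2]

val_accuracy = accuracy(val_predictions, val_labels)
print(f"validation accuracy: {val_accuracy:.1%}")
```

Running the same function on the predictions of a TensorFlow model and a PyTorch model, using the same validation set, gives a like-for-like comparison.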

Performance Comparison

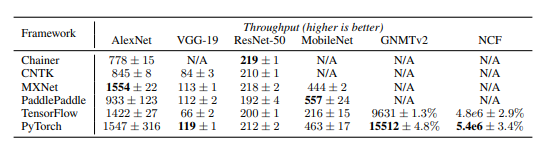

The table above compares the training speed of the two frameworks using 32-bit floating-point precision. The benchmark reports throughput in different units: images per second for AlexNet, VGG-19, ResNet-50, and MobileNet, tokens per second for the GNMTv2 model, and samples per second for the NCF model.

Notably, the benchmark data indicates that PyTorch outperforms TensorFlow in terms of training speed. One caveat matters when reading the numbers: both frameworks use the same versions of the cuDNN and cuBLAS libraries and offload a substantial portion of their computation to them. These shared libraries explain why the results are close to each other, not why PyTorch leads. The remaining gap comes from how each framework handles the work around those library calls.

The data showcased in the benchmark reinforces PyTorch’s efficacy in rapidly training models, making it a compelling choice for practitioners who care about training speed.
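Throughput figures like the ones in the benchmark are simple ratios: items processed divided by elapsed time. The sketch below shows the arithmetic in plain Python. The batch size, step count, and timings are hypothetical and are not taken from the benchmark table, and "framework A" and "framework B" are placeholders.

```python
def throughput(items_processed, elapsed_seconds):
    """Items (images, tokens, or samples) processed per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return items_processed / elapsed_seconds


def relative_speedup(fast, slow):
    """How much faster `fast` is than `slow`, as a fraction of `slow`."""
    return (fast - slow) / slow


# Hypothetical run: 500 training steps with a batch of 64 images each.
batch_size, steps = 64, 500
images_per_sec_a = throughput(batch_size * steps, 20.0)  # framework A: 20 s
images_per_sec_b = throughput(batch_size * steps, 25.0)  # framework B: 25 s

gap = relative_speedup(images_per_sec_a, images_per_sec_b)
print(f"A: {images_per_sec_a:.0f} img/s, B: {images_per_sec_b:.0f} img/s, gap: {gap:.0%}")
```

When you run your own comparison, keep the model, batch size, precision, and hardware identical so that the ratio reflects the framework and nothing else.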

Computational Graphs

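TensorFlow: TensorFlow 1.x used a static computational graph, where the structure of the graph is defined before any computation runs. TensorFlow 2.x executes eagerly by default and builds static graphs on request through tf.function. A static graph can be advantageous for optimization and deployment.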

PyTorch: Utilizes a dynamic computational graph that is built as the code runs, allowing you to modify the graph on the fly. This makes it easier to debug and experiment with models but might lead to slightly slower performance during deployment.
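The difference between defining a graph up front and building it as the code runs can be illustrated with a toy sketch in plain Python. Neither `StaticGraph` nor `dynamic_forward` is a TensorFlow or PyTorch API; both are hypothetical names used only to show the two execution styles.

```python
class StaticGraph:
    """Define-then-run: operations are recorded first and executed later."""

    def __init__(self):
        self.ops = []

    def add_op(self, fn):
        # Record the operation; nothing is computed yet.
        self.ops.append(fn)
        return self

    def run(self, x):
        # The recorded structure is fixed; only the input changes.
        for fn in self.ops:
            x = fn(x)
        return x


def dynamic_forward(x):
    """Define-by-run: ordinary Python control flow picks the ops per input."""
    steps = 0
    while x < 100:  # the number of operations depends on the data
        x = x * 2
        steps += 1
    return x, steps


# Static style: build the whole pipeline, then feed data through it.
graph = StaticGraph().add_op(lambda v: v * 2).add_op(lambda v: v + 1)
static_result = graph.run(3)

# Dynamic style: the "graph" is whatever the code happens to execute.
dynamic_result = dynamic_forward(3)

print(static_result, dynamic_result)
```

Because the static pipeline is known in full before it runs, a framework can analyze and optimize it ahead of time. The dynamic version is easier to step through with a debugger because it is just regular code.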

Ease of Use

TensorFlow: Historically known for its verbosity, TensorFlow 2.x introduced a more user-friendly and intuitive API similar to PyTorch, making it more accessible to beginners.

PyTorch: Gained popularity for its Pythonic and intuitive design. Its dynamic nature allows for easier debugging and faster experimentation.

Flexibility

TensorFlow: Provides a strong ecosystem for production deployment, model optimization, and serving. It’s well-suited for large-scale distributed training and deployment in resource-intensive scenarios.

PyTorch: Offers flexibility and control over every aspect of the model, making it a preferred choice for researchers and those who need to experiment with novel architectures and ideas.

Community and Ecosystem

TensorFlow: Benefits from a large community and ecosystem, which means extensive documentation, pre-trained models, and support for a wide range of applications.

PyTorch: While its community is slightly smaller, PyTorch users often praise the framework’s more personal and supportive atmosphere. The ecosystem is rapidly growing, with a focus on research and innovation.

Visualization and Debugging

TensorFlow: Offers TensorBoard, a powerful tool for visualizing training progress and model graphs.

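PyTorch: Supports TensorBoard through its built-in torch.utils.tensorboard module and integrates with tools like TensorBoardX and Visdom for visualization. Because models run as ordinary Python code, it also works directly with standard Python debugging tools such as pdb.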

Popularity in Research vs. Industry

TensorFlow: Widely used in both research and industry, especially for large-scale applications and production deployment.

PyTorch: Initially gained popularity in academia and research due to its flexibility, but it’s increasingly being adopted in various industries as well.

Choosing Between PyTorch & TensorFlow: Making an Informed Decision

As the debate between PyTorch and TensorFlow unfolds, let’s navigate through a set of arguments that can guide your choice of framework for your project.

Final Word On Pros & Cons Of PyTorch

Pros

Research-Ready Champion: If your project leans towards research-oriented endeavors, PyTorch stands as a preferred ally. Its widespread acceptance within the AI research community positions it as a valuable asset for innovation.

Learning Curves and Design: PyTorch’s learning curve might be a bit steeper when it comes to model design, especially when compared to a hybrid Keras-TensorFlow approach. However, this curve can be a catalyst for deep understanding and creativity in your designs.

A Rising Star: PyTorch’s momentum is undeniable. It’s not just trending—it’s actively gaining more attention and traction, fueling its evolution and adoption.

Learning Institutions Embrace PyTorch: Prestigious academic institutions have embraced PyTorch as a teaching tool. Its presence in educational settings underscores its value as a tool for nurturing AI talents.

Cons

Production-Ready Tooling: PyTorch might not be as comprehensive as TensorFlow when it comes to production-ready tools for end-to-end projects. TensorFlow’s robust ecosystem offers an edge here.

Final Word On Pros & Cons Of TensorFlow

Pros

A Full-Fledged Production Ecosystem: TensorFlow shines in the realm of production. With tools like TensorFlow Serving, TFLite, and TFX, along with support for multiple languages, it provides a complete ecosystem for production deployments.

Keras: Speed and Experimentation: The integration of Keras within TensorFlow empowers rapid experimentation. This combination of power and agility can accelerate your model development.

MLOps Prowess with TFX: TensorFlow’s TFX enables the seamless construction of MLOps pipelines, streamlining the deployment and management of machine learning models.

Cons

Research Community Scale: TensorFlow’s research community might be relatively smaller, potentially limiting the range of collaborative research endeavors.

Hugging Face Compatibility: Some Transformer models on Hugging Face may be available only for PyTorch, or may receive TensorFlow support later, which is a consideration for projects in this domain.

In the end, the choice between PyTorch and TensorFlow boils down to the nature of your project, your familiarity with the frameworks, and the specific tools and ecosystem that best align with your project goals. With a clearer understanding of the pros and cons, you’re well-equipped to make an informed decision that sets you on the path to AI success.

Conclusion

In the TensorFlow vs. PyTorch debate, there’s no definitive winner—it all boils down to your specific needs. If you’re looking for a powerful framework that’s well-suited for production deployment, distributed computing, and large-scale applications, TensorFlow might be your choice. On the other hand, if you prioritize flexibility, ease of use, and the ability to experiment quickly, PyTorch could be the better fit.

Ultimately, both frameworks have contributed significantly to the advancement of deep learning and have their own passionate user bases. Regardless of your choice, the important thing is to leverage the strengths of the framework to create innovative and impactful AI solutions.
