Serverless Concepts

Introduction

As a cloud-native development model, serverless allows developers to focus on building their applications rather than managing the underlying servers. The code still runs on servers, but developers do not need to know where. Provisioning, maintaining, and scaling the server infrastructure are tasks handled by the cloud provider. To deploy, developers only need to package their code, typically as an archive or a container image.

Once deployed, serverless applications scale up and down automatically in response to fluctuating demand. Public cloud providers’ serverless products are generally event-driven and metered on demand, so a serverless function that is not currently being used incurs no compute cost.

In this post, we will cover the architecture and important concepts of serverless.

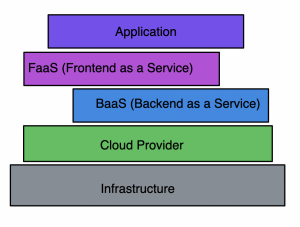

Serverless Architecture

In a traditional architecture, servers must be set up and managed before users can reach an application’s business logic. Teams are responsible for keeping those servers up to date with software and security patches and for creating backups in case of disaster. Serverless computing frees developers to concentrate on creating useful software rather than maintaining servers.

With a serverless architecture, your team can stop worrying about cloud or on-premises server management and concentrate on building a better product. As a result of this decreased overhead, developers are free to focus their efforts where they are most needed: on creating scalable, trustworthy solutions.

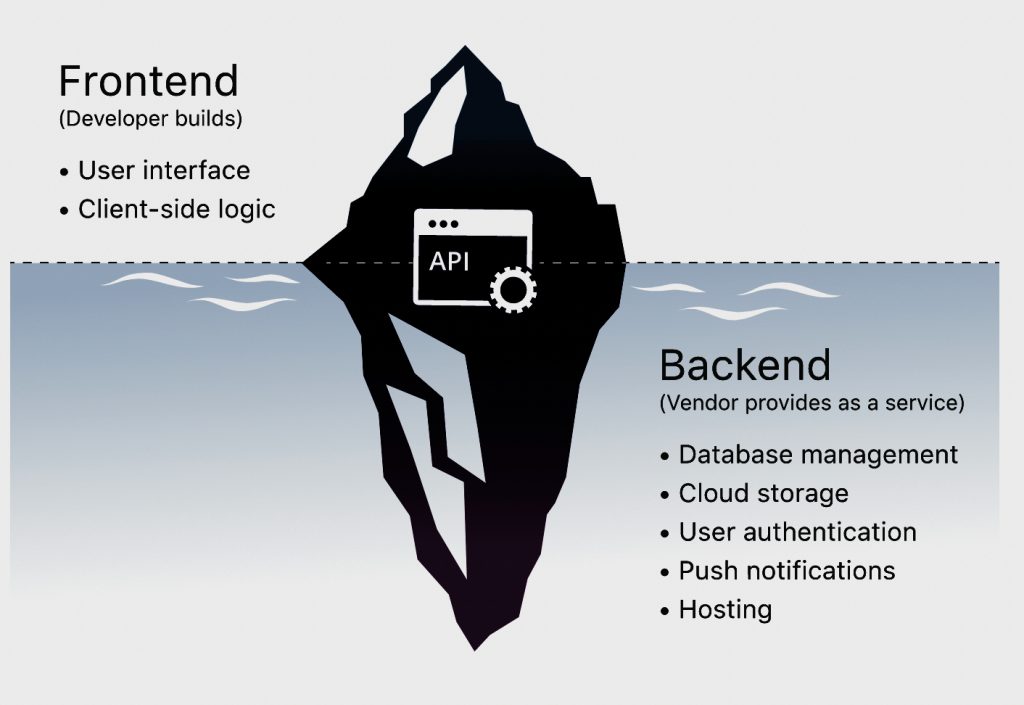

Backend as a Service

Backend as a Service (BaaS) is a cloud service model in which server-side logic and state are hosted by a cloud provider and used by client applications running in a web browser or on a mobile device. At its inception the model targeted mobile apps exclusively, but it has now been adopted for web applications too.

Suppose you’re directing a movie. In addition to shooting and directing scenes, a film director manages camera crews, lighting, set building, costumes, casting, and the production timeline. Imagine a service that handled all the behind-the-scenes tasks so the director only had to direct and film. With BaaS, the vendor handles the ‘lighting’ and ‘camera’ (the server-side functions) so the director (the developer) can concentrate on the ‘action’: what the end user sees and experiences.

Why are we discussing BaaS? Because BaaS is the groundwork for what serverless eventually became. With BaaS, you manage no servers at all. When you use an API to connect to a hosted database, you don’t have to worry about where the database lives or what resources it has available. That is exactly the principle behind serverless.

Function as a Service (FaaS)

Function as a Service is a cloud service model in which business logic runs in event-triggered, ephemeral containers. FaaS differs from BaaS in that you provide your own code, which the provider executes in dynamically allocated containers. In contrast to BaaS, this gives developers more control over the final product, because they build their own application logic rather than selecting from a collection of pre-existing services.

The cloud service provider handles deploying the code into containerized environments. These containers are:

- Stateless, which makes it easier to integrate systems.

- Ephemeral, so they exist only for a limited amount of time.

- Event-triggered, so they are activated automatically in response to a predetermined event (trigger).

- Fully managed by the cloud service, so you pay only for the resources you use rather than for software and hardware that is constantly running.

Component Breakdowns

Event-Triggered

You do not start the application yourself; instead, it waits for a request. Your application only runs when it is triggered, and the trigger is an event that you define.

Containers

You do not manage a server to run your code. When the event fires, the provider starts a generic container that runs your code and serves the request.

Dynamic

You do not need to specify a capacity requirement. As more requests arrive, the cloud provider spins up more containers.

Ephemeral

A function instance lasts only a short period of time and can be torn down once the request has been served.
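The four properties above can be sketched as a single function. This is only a minimal illustration: it assumes the `handler(event, context)` entry-point convention used by AWS Lambda's Python runtime, and the event shape (a `name` field) and the local call at the bottom are hypothetical stand-ins for a real trigger.

```python
import json


def handler(event, context):
    """Entry point the platform calls once per event (one invocation).

    The function is stateless: everything it needs arrives in `event`,
    and nothing is kept in memory for the next request.
    """
    name = event.get("name", "world")  # hypothetical event field
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Locally we play the role of the trigger; in the cloud the provider does this.
    print(handler({"name": "serverless"}, None))
```

Note that nothing in the code starts a server or listens on a port. The provider decides when and where a container runs it.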

Keywords in Serverless Architecture

Although serverless architecture removes the need for server maintenance, its learning curve is steep, particularly when chaining numerous functions together to form complex workflows in an application. It is therefore useful to get acquainted with the following serverless terms:

Invocation

A single function execution

Duration

The time it takes for a serverless function to execute

Cold Start

The latency that occurs when a function is triggered for the first time or after a period of inactivity
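A cold start can be imitated locally. The sketch below is a simulation under stated assumptions, not provider code: the `Container` class, `expensive_init`, and the 50 ms setup cost are all hypothetical, and they stand in for a fresh function instance that has to load dependencies before serving its first request.

```python
import time


def expensive_init():
    """Stand-in for loading dependencies or opening a database connection."""
    time.sleep(0.05)  # hypothetical initialization cost
    return {"ready": True}


class Container:
    """Simulates one function instance; a new Container is always 'cold'."""

    def __init__(self):
        self._resource = None

    def invoke(self, event):
        cold = self._resource is None
        if cold:
            # Only the first invocation in this container pays for setup.
            self._resource = expensive_init()
        return {"cold_start": cold}


def timed_invoke(container, event):
    """Invoke the container and record how long the call took."""
    start = time.perf_counter()
    result = container.invoke(event)
    result["seconds"] = time.perf_counter() - start
    return result


if __name__ == "__main__":
    container = Container()
    print(timed_invoke(container, {}))  # cold: includes the setup time
    print(timed_invoke(container, {}))  # warm: reuses the initialized resource
```

The same idea explains a common practice: keep expensive setup outside the per-request path so that warm invocations can reuse it.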

Concurrency Limit

A limit imposed by the cloud provider on the number of function instances that can run simultaneously in a single region. Invocations that exceed this limit are throttled.
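To make the idea concrete, here is a small simulation of a per-region concurrency limit. It is a sketch only: `RegionConcurrency` and `ThrottledError` are hypothetical names, and real providers may queue or retry throttled invocations rather than reject them outright.

```python
import threading


class ThrottledError(Exception):
    """Raised when the simulated concurrency limit is exceeded."""


class RegionConcurrency:
    """Tracks how many function instances are running in one region."""

    def __init__(self, limit):
        self.limit = limit
        self.in_flight = 0
        self._lock = threading.Lock()

    def start(self):
        """Claim a slot for a new invocation, or throttle if none is free."""
        with self._lock:
            if self.in_flight >= self.limit:
                raise ThrottledError(f"limit of {self.limit} reached")
            self.in_flight += 1

    def finish(self):
        """Release the slot once the invocation has completed."""
        with self._lock:
            self.in_flight -= 1


if __name__ == "__main__":
    region = RegionConcurrency(limit=2)
    region.start()
    region.start()
    try:
        region.start()  # third concurrent invocation exceeds the limit
    except ThrottledError as exc:
        print("throttled:", exc)
    region.finish()
    region.start()  # a slot is free again
    print("in flight:", region.in_flight)
```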

Timeout

The maximum time a cloud provider allows a function to run before terminating it. Providers typically define both a default and a maximum timeout.
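A timeout can be approximated locally with Python's standard `concurrent.futures` module. This is a rough sketch: `invoke_with_timeout`, `fast`, and `slow` are hypothetical names, and unlike a real platform, which terminates the function, this version only stops waiting for the result while the worker thread finishes in the background.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout


def invoke_with_timeout(fn, event, timeout_seconds):
    """Run fn(event) and give up waiting after timeout_seconds."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(fn, event)
    try:
        result = future.result(timeout=timeout_seconds)
        return {"status": "ok", "result": result}
    except FutureTimeout:
        return {"status": "timed_out", "result": None}
    finally:
        # Do not block on the worker; a real platform would kill it instead.
        pool.shutdown(wait=False)


def fast(event):
    return event["n"] * 2


def slow(event):
    time.sleep(0.3)  # deliberately longer than the timeout used below
    return "done"


if __name__ == "__main__":
    print(invoke_with_timeout(fast, {"n": 2}, timeout_seconds=1.0))
    print(invoke_with_timeout(slow, {}, timeout_seconds=0.05))
```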

Multi-tier Architecture vs. Serverless

| | Serverless | Multi-tier |
| --- | --- | --- |
| Skill Set | Development only: the cloud provider handles the infrastructure, which reduces the skilled staff required. | Requires operations: the infrastructure must be managed by someone. |
| Costs | Low startup costs | High, as the infrastructure has to run all the time |
| Use Cases | Applications with sporadic traffic | Applications with regular traffic |
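The cost row can be made concrete with a back-of-the-envelope model. The sketch below assumes a typical pay-per-use scheme (a charge per request plus a charge per GB-second of compute) against an always-on instance billed by the hour. Every price and traffic figure in it is a hypothetical placeholder, not a real provider rate.

```python
def serverless_monthly_cost(invocations, avg_duration_s, memory_gb,
                            price_per_gb_second, price_per_request):
    """Pay-per-use: cost grows with the number and length of invocations."""
    compute = invocations * avg_duration_s * memory_gb * price_per_gb_second
    requests = invocations * price_per_request
    return compute + requests


def always_on_monthly_cost(instances, price_per_instance_hour, hours=730):
    """Always-on: cost is fixed by uptime, regardless of traffic."""
    return instances * price_per_instance_hour * hours


if __name__ == "__main__":
    # Hypothetical prices, for illustration only.
    gb_second, per_request, per_hour = 0.00002, 0.0000002, 0.05

    for monthly_requests in (0, 100_000, 50_000_000):
        faas = serverless_monthly_cost(monthly_requests, 0.2, 0.5,
                                       gb_second, per_request)
        server = always_on_monthly_cost(1, per_hour)
        print(f"{monthly_requests:>11,} requests: "
              f"serverless={faas:.2f} always-on={server:.2f}")
```

With no traffic the pay-per-use cost is zero while the always-on cost is unchanged, which is why sporadic workloads tend to favor serverless and steady heavy traffic can favor running your own tier.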

Serverless Architecture vs. Container Architecture

Serverless and container architectures both let developers deploy application code while abstracting away the host environment, but there are significant differences between the two. With a container architecture, for instance, developers must update and maintain each container they deploy, along with its system settings and dependencies. In serverless architectures, by contrast, the cloud provider takes care of server maintenance entirely. In addition, serverless applications scale automatically, whereas scaling container architectures calls for an orchestration platform such as Kubernetes.

Containers give developers control over the underlying operating system and runtime environment, which makes them a good fit for applications that receive a consistently high volume of traffic, or as the first stage in a move to the cloud. Serverless functions, on the other hand, are better suited to work that is triggered by specific events, such as the processing of payments.

Serverless Benefits

- Reduced infrastructure burden: cloud service providers are responsible for managing the servers and the infrastructure associated with them.

- Built-in scaling: more computing power is automatically provided when it is required and removed when it is no longer required.

- Operational cost savings: because there is no infrastructure to maintain, operating costs are lower.

- Deployments are truly environment agnostic.

Serverless Drawbacks

Loss of Control

In a serverless setup you have little control over the software stack your code runs on. If a server malfunctions, you must rely on the cloud provider to fix it.

Security

A cloud provider may run code from several customers on the same server. If that shared server is misconfigured, your application data might be exposed.

Performance Impact

Cold starts are common in serverless environments and can add noticeable latency, up to several seconds in some cases, when a function runs after a period of inactivity.

Testing

In a serverless environment, developers can run unit tests on function code, but integration tests are difficult because the function depends on managed services that are hard to reproduce locally.
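The unit-testing half of that statement is straightforward, because a function handler is just a callable that can be given a hand-built event. The sketch below uses Python's standard `unittest` module. The `handler` and its order-shaped event are hypothetical examples, not part of any provider's API.

```python
import unittest


def handler(event, context):
    """Hypothetical function under test: totals the items in an order event."""
    items = event.get("items", [])
    return {"total": sum(item["price"] * item["qty"] for item in items)}


class HandlerTest(unittest.TestCase):
    def test_totals_order(self):
        # The event is built by hand; no cloud trigger is involved.
        event = {"items": [{"price": 5, "qty": 2}, {"price": 3, "qty": 1}]}
        self.assertEqual(handler(event, None), {"total": 13})

    def test_empty_event(self):
        self.assertEqual(handler({}, None), {"total": 0})


def run_tests():
    """Run the test case and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(HandlerTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_tests()
```

What this cannot cover is the behavior of the real trigger, permissions, and downstream services, which is where the integration-testing difficulty comes from.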

Vendor Lock-In

Large cloud providers like AWS provide databases, message queues, and APIs for building serverless apps. Although you can mix and match components from multiple providers, services from a single vendor integrate most smoothly, which makes it harder to move away later.

Cloud Serverless Offerings

AWS Lambda

Lambda, AWS’s FaaS offering, is the central service in AWS’s serverless catalog. With AWS Lambda, we can run our code without provisioning or managing servers. We can write Lambda functions in languages such as Node.js, Python, Go, and Java, and deploy our code as either a ZIP file or a container image.

This means we have access to reliable computing resources regardless of load. Our serverless application can also make use of the many other services offered by AWS. For instance, event-driven apps can be built with Amazon EventBridge; APIs can be created, published, maintained, monitored, and secured with Amazon API Gateway; and Amazon S3 can store any quantity of data reliably and flexibly.

Azure Functions

Azure Functions, the FaaS service offered by Microsoft Azure, is an event-driven serverless computing platform. Its Durable Functions extension helps us solve difficult orchestration problems, such as stateful coordination. Using triggers and bindings, we can connect to a wide variety of external services without writing custom integration code. We can also deploy our functions to Kubernetes and run them there.

Azure also offers App Service for more sophisticated applications. It provides a fully managed platform on which we can build, deploy, and scale web applications in any widely used language, such as Node.js, Java, or Python, while meeting stringent enterprise-level performance, security, and compliance requirements.

Google Cloud Functions

In a similar vein, Cloud Functions is the primary FaaS option within GCP’s portfolio of services. A scalable, pay-as-you-go FaaS, Cloud Functions lets us run our code without maintaining any servers. It scales automatically with current demand, has built-in support for monitoring, logging, and debugging, and includes function-level and role-based security.

GCP’s App Engine is a significant PaaS offering. Cloud Functions is better suited to simple, independent, event-driven functions, whereas App Engine is the better choice for more sophisticated apps that combine several features. Cloud Run is another GCP service that can be used to deploy serverless apps as containers, without managing a Kubernetes cluster such as GKE yourself.

Conclusion

Here, we covered the groundwork for understanding serverless architecture. We looked at how serverless differs from multi-tier and container architectures, and discussed the benefits and drawbacks of serverless design. Finally, we surveyed some well-known serverless offerings.
